Foundation models suck at details because they hold the average of humanity’s knowledge. A person has a gazillion instances of memory about a specific situation. This is the spectacle making fun of humanity.

Reading Stephen Wolfram’s memories of SMP (although I had read them many years ago) is strange in how much the story feels like déjà vu…

Remember: The Mind Kernel is the engine, but it works on a source code that is the Mind Graph and Toolbox Theory.

With everything in place, I just have to figure out what exactly Attention and Focus are in the Mind Graph. My theory is to assume you have the toolkit (which you do) and that it can be seen abstractly; with Attention and Focus it jumps right into the hardware of the brain, which is not my realm. But if the physics of the graph theories explained it, that would be magnificent.

I guess I’m doing something funny here. I’m doing what Earth was meant to do in The Ultimate Hitchhiker's Guide to the Galaxy (Douglas Adams). I’m finding a prompt :)))) If my work gets refined nicely, I’ll arrive at one big, immense prompt, and then the AI will catch up and I will give it to many agents to go devise the experiments, compute the math of it, and do the rest of the things…

I used to believe that what we do in university and in our youth is “play”. Now I have grown up and seen many different companies, and essentially my Kary Foundation was decades ahead of all of them. Maybe that was all serious.

Before the previous war I wished to have touched a piston-filler pen. Now I have five.

Engineering makes people’s standards so high that they get crippled in action.

I used to improvise on the piano, and I still do; my improvisations are not good. If you listen to them you will have a hard time. I started doing this when I had no music education and my parents had just bought a piano. I would sit on the chair, open the piano and try to figure out the music I had heard. I succeeded in figuring out the right hand of the Turkish March, with my Dad’s ear to correct me. Beyond that I had less luck. So I began exploring patterns on the instrument and figured out some music, basic compositions like a two-year-old exploring their first pen (a bad idea, now that I think about it; they might suffocate…). I had always felt that my creations were ill and problematic. There is something about the early days of any art form where your creations feel wrong and you can’t understand exactly why. This was happening to me.

As more time passed, I learned about the patterns and, in my own language today, “collected tools” for my toolkit :) My compositions still suffered from the same problem. They were by all means horrible.

Years later, I was watching a Rick Beato course on playing improvised jazz and he said something like: never just put your hands on the piano and play; you will play random things that mean nothing. It will only produce noise, and your brain will learn to do it. It was shocking to learn that others had my problem too…

Just as I was getting horrified by all of this, something even worse and more interesting happened. There was this funny thing where people would let a keyboard suggest the next word and keep accepting the suggestion until it made a full sentence. They had no idea LLMs were coming. These sentences were just like the piano thing: real brain rot. Horrible. And I also learned this technique where you sit at the keyboard and type anything that comes to your mind. The same observation holds there as well.

I’m not sure exactly why I am mentioning these stories; to my brain they are immensely related to the things I’m going to tell you about, and they planted a seed at least. I always wondered: what is good creation, and why does it happen to you? When the LLMs came, the suggestions of the bullshit generator became real insights. And that was the Attention Mechanism. Rick Beato’s tip for not making crap was also paying attention. (How ironic; he could win the Nobel Prize in Physics if he knew what he had discovered. Joking, of course.)

It took me decades of working across every creative medium I had found and explored to see that everything is made of Elements. Even the mind, and certainly all forms of creation. Once you see it, it becomes such a basic and obvious thing that you forget how much thinking it required to arrive at it. And when you do get there, it becomes about shaping the bigger meanings.

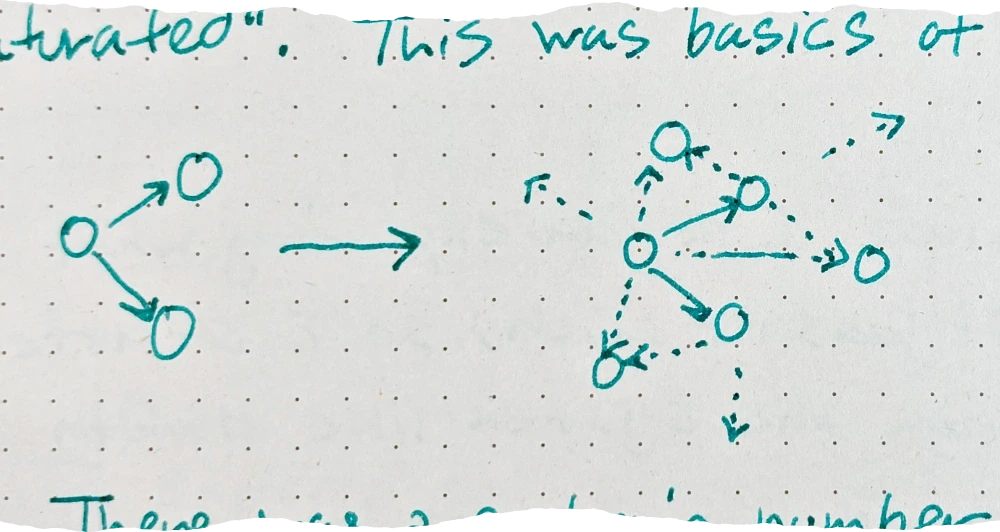

My intuition here also sees the world through the lens of LEGO, programming, and systems in general. In LEGO everything is made of smaller things, down to the point where you reach the bricks, the “atoms”. In programming, all software is made of the language’s features; everything is made of functions, and each function of smaller functions. It goes on. The interesting thing is that all creation, intellectual or physical, is made of graphs of definitions. A program consists of many functions that call each other in graphs. It is possible to call function “D” from both “C” and “B”, and to call both of these from function “A”. So it is a graph.
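The A, B, C, D example can be written down as a tiny adjacency map. This is only a sketch; the helper names `callers_of` and `is_tree` are made up for illustration. It shows why the structure is a graph and not a tree: “D” has two callers.

```python
# Call graph from the text: A calls B and C; B and C both call D.
calls = {
    "A": ["B", "C"],
    "B": ["D"],
    "C": ["D"],
    "D": [],
}

def callers_of(graph, target):
    """Return every function that calls `target` directly, sorted by name."""
    return sorted(f for f, callees in graph.items() if target in callees)

def is_tree(graph):
    """In a tree every node has at most one parent; a shared callee breaks that."""
    return all(len(callers_of(graph, node)) <= 1 for node in graph)

print(callers_of(calls, "D"))  # ['B', 'C']
print(is_tree(calls))          # False
```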

I’m sure that is not a problem for you; but you may say: “How can a LEGO model be a graph? It is a tree structure; how on earth can a physical tree structure be a graph?”

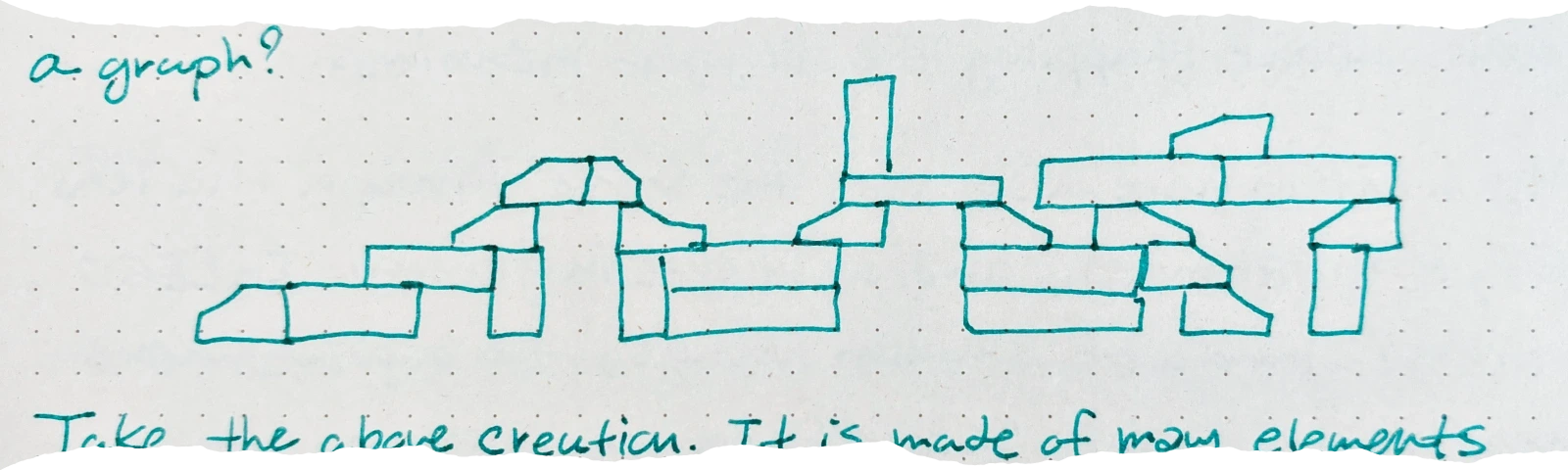

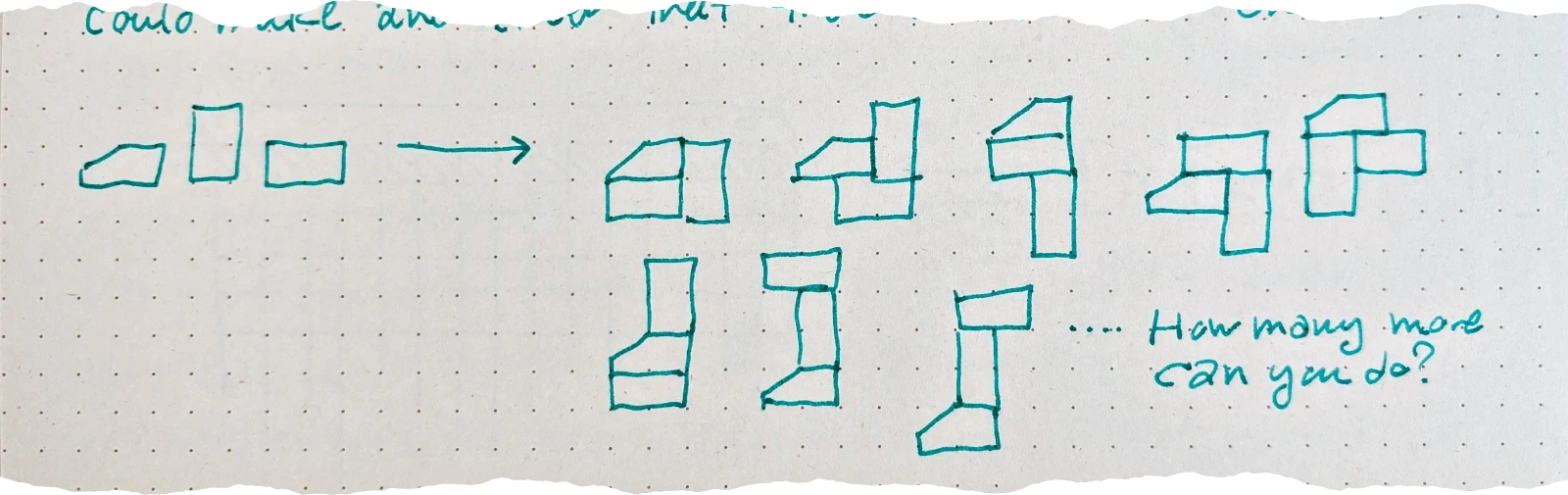

Take the above creation. It is made of many elements connected together in ways that are tree-structured. It, by definition, is a perfect tree.

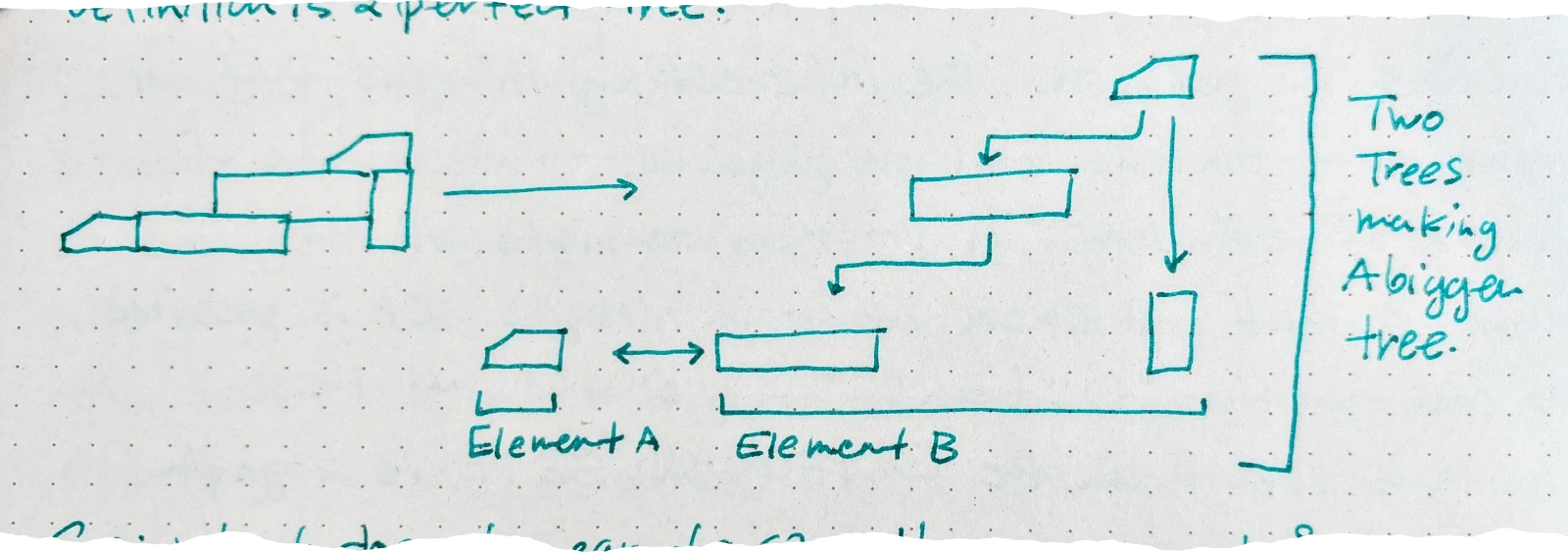

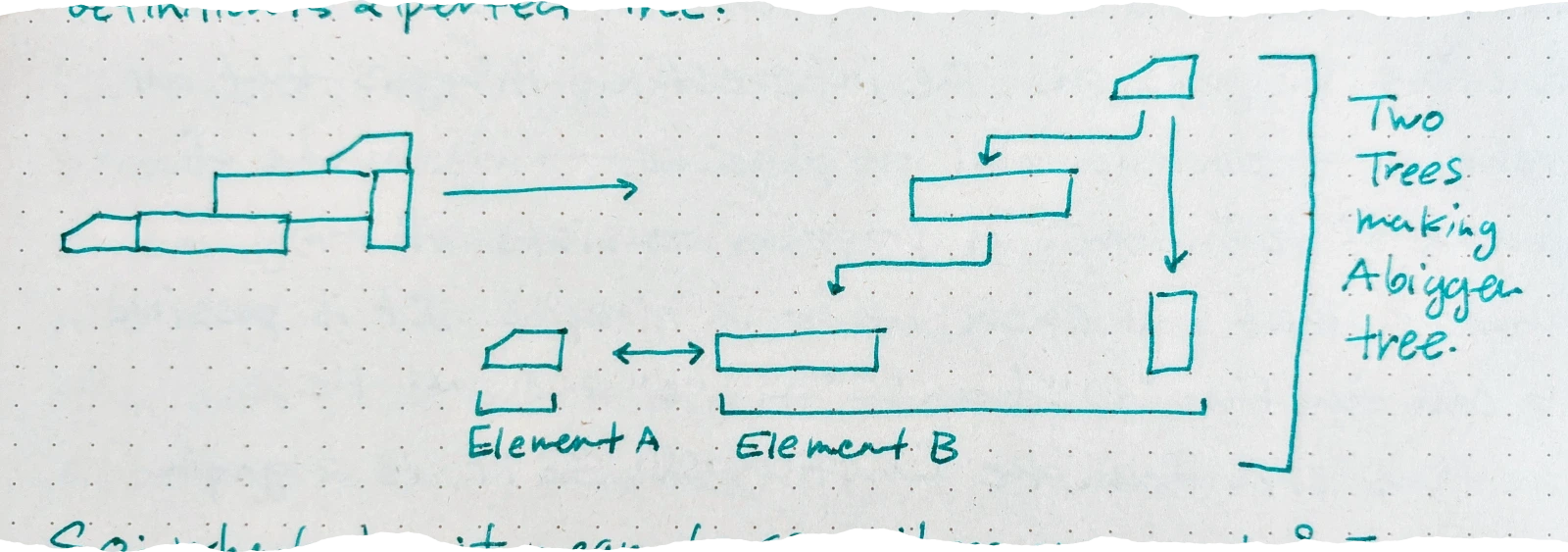

So, what does it mean to say these are graphs? The elements in the software ran at different times; physically they were different; but their blueprints, the function definitions, were all defined in one place. The bricks in the LEGO world are not the same physically, but they are all “copies” of a world of unique blueprints:

And therefore a graph:
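One way to make this concrete, as a rough sketch with a made-up model (the `Part` class and the brick names are assumptions for illustration): the physical build is a tree of parts, but once every part is replaced by the blueprint it copies, the same blueprint ends up with more than one parent.

```python
from dataclasses import dataclass, field

@dataclass
class Part:
    blueprint: str                                # the definition this physical piece copies
    children: list = field(default_factory=list)  # pieces attached on top of it

# Hypothetical build: physically a tree of six separate pieces.
model = Part("base plate", [
    Part("2x4 brick", [Part("2x2 brick")]),
    Part("2x4 brick", [Part("2x2 brick")]),
    Part("2x2 brick"),
])

def count_parts(part):
    """Count physical pieces in the tree."""
    return 1 + sum(count_parts(child) for child in part.children)

def blueprint_edges(part, edges=None):
    """Collect (parent blueprint, child blueprint) pairs, merging identical copies."""
    edges = set() if edges is None else edges
    for child in part.children:
        edges.add((part.blueprint, child.blueprint))
        blueprint_edges(child, edges)
    return edges

edges = blueprint_edges(model)
parents = {parent for parent, child in edges if child == "2x2 brick"}
print(count_parts(model))  # 6
print(sorted(parents))     # ['2x4 brick', 'base plate']
```

Six physical pieces collapse into three blueprints, and the “2x2 brick” blueprint is reached from two different parents, which a tree does not allow.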

My understanding of the world was also enhanced when I created Arendelle Language. It wasn’t anything like making other programming languages; it was making one that never existed before; to do something that never existed before; by a person who knew nothing about programming languages and it was essentially his first real and big project.

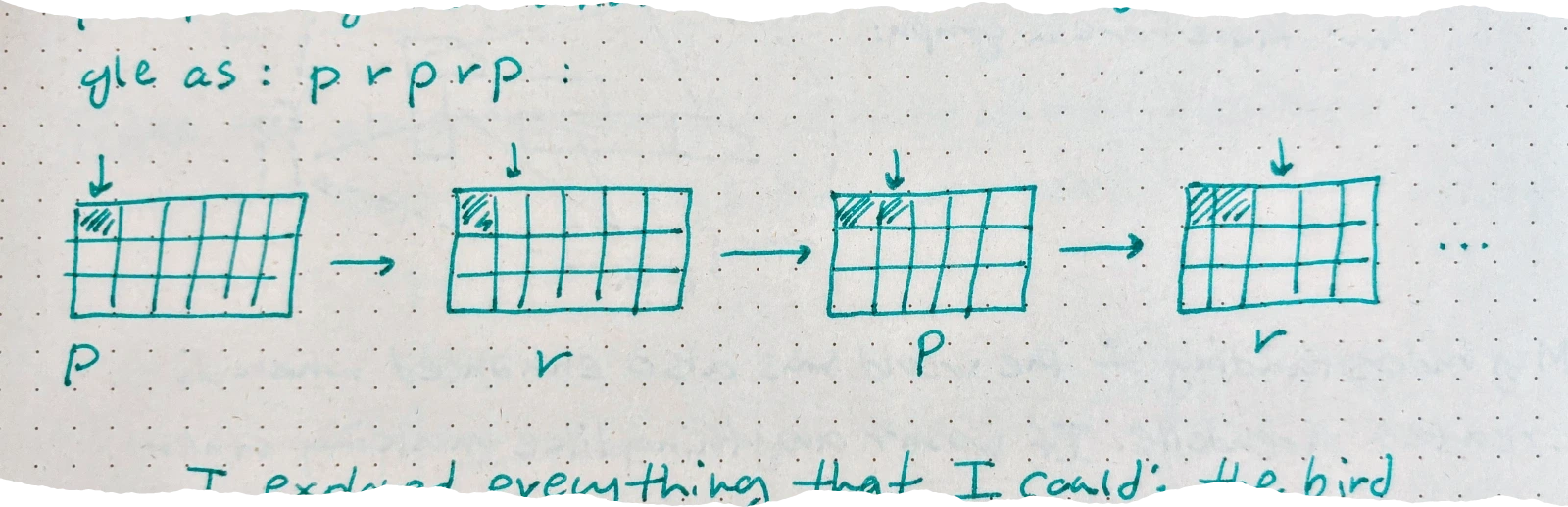

So what did I do when working on Arendelle Language? I would create new features (and I had to “invent” every new feature, as I had no internet at school, little English, and almost no understanding of computers). My features there were like my theories here: built on my own bricks. And so in Arendelle Language I would first create something and then spend days, sometimes weeks, playing with it and with the combinations of things. At first I had made five operations, r l d t p: right, left, down, top, and paint. So you could create a three-pixel rectangle on the grid as p r p r p:
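As a toy illustration of those five commands (not Arendelle’s real implementation; the grid size, the `#` paint character, and the choice to ignore paints outside the grid are all assumptions), a few lines are enough to run `p r p r p`:

```python
def run(program, width=5, height=3):
    """Toy interpreter for the five commands in the text: r, l, d, t (top), p (paint)."""
    grid = [[" "] * width for _ in range(height)]
    x = y = 0  # the cursor starts in the top-left corner (assumption)
    moves = {"r": (1, 0), "l": (-1, 0), "d": (0, 1), "t": (0, -1)}
    for command in program.replace(" ", ""):
        if command == "p":
            if 0 <= x < width and 0 <= y < height:
                grid[y][x] = "#"
        elif command in moves:
            dx, dy = moves[command]
            x, y = x + dx, y + dy
        else:
            raise ValueError(f"unknown command: {command!r}")
    return ["".join(row) for row in grid]

print(run("p r p r p")[0])  # prints "###  "
```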

I explored everything that I could; the bird logo of the Arendelle Language was the first; many icons came next, and then the Q-bert (Kary 18⟡71). I had to implement a command to change colors as well. For weeks I explored the possibilities until, during two weeks of living at school without internet, I finally figured out that you can call a function inside itself and implemented recursive parsing, which I had no name for until much later. So here I had created my big breakthrough: the “Loop”. You could now write:

![`[17, p r]` -> a drawing of a partial grid being filled in steps with the caption 'x 77 times' and a long horizontal bar being shaded in](/media/scrapped-papers/new-ideas-and-the-graph-saturation-towards-understanding-creativity/6.webp)

Once I had created the loop, I began exploring what you could make with it. It wasn’t much; so I started working on some data from the system, like #width and #height, that you could put in the loop so that it worked from the computed screen size:

![`[#width, p r]` => a drawing of a window interface with a full row shaded in a grid pattern](/media/scrapped-papers/new-ideas-and-the-graph-saturation-towards-understanding-creativit/7.webp)
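The “call a function in itself” idea can be sketched as a recursive parser in which a loop body is just another program. This is a guess at the shape of it, not the original code; the function names and the `env` dictionary that supplies `#width` are assumptions.

```python
def parse(source, pos=0):
    """Parse commands until a closing ']' or the end of input; return (nodes, next position)."""
    nodes = []
    while pos < len(source):
        ch = source[pos]
        if ch == "]":
            return nodes, pos + 1
        if ch == "[":
            comma = source.index(",", pos)
            count = source[pos + 1:comma].strip()
            body, pos = parse(source, comma + 1)  # recursion: the body is itself a program
            nodes.append(("loop", count, body))
            continue
        if not ch.isspace():
            nodes.append(("cmd", ch))
        pos += 1
    return nodes, pos

def expand(nodes, env):
    """Unroll loops into a flat list of single-letter commands."""
    out = []
    for node in nodes:
        if node[0] == "cmd":
            out.append(node[1])
        else:
            _, count, body = node
            times = env[count] if count.startswith("#") else int(count)
            out.extend(expand(body, env) * times)
    return out

def flatten(source, env=None):
    nodes, _ = parse(source)
    return "".join(expand(nodes, env or {}))

print(flatten("[3, p r]"))                      # prprpr
print(flatten("[#width, p r]", {"#width": 4}))  # prprprpr
print(flatten("[2, [2, p r] d]"))               # prprdprprd
```

Because `parse` calls itself for every `[`, nesting loops inside loops needs no extra code.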

I tried everything I could with these and then created variables and conditions, explored all my possible ideas, and then arrived once again at the position where there was nothing new for me to make with these things; here, with Micha, we made Arrays and what we called stored spaces, and much, much more. With each new feature new possibilities opened, until the possible combinations (that would satisfy us) were “Saturated”. This was the basics of sub-set computation.

There was a certain number of combinations you could make and after that there was no new one.

And so I had this journey with Arendelle Language that at some point there was nothing new I could do with it (or Micha or Sina Bakhtiari for that matter). We were all aware of this.

Years passed and I found myself in a boring and unbearable university class about computer storage. You had to memorize many different formulas to compute disk rotation, space, and so on, in order to know in how many nanoseconds the head would reach a segment of a tape or whatever.

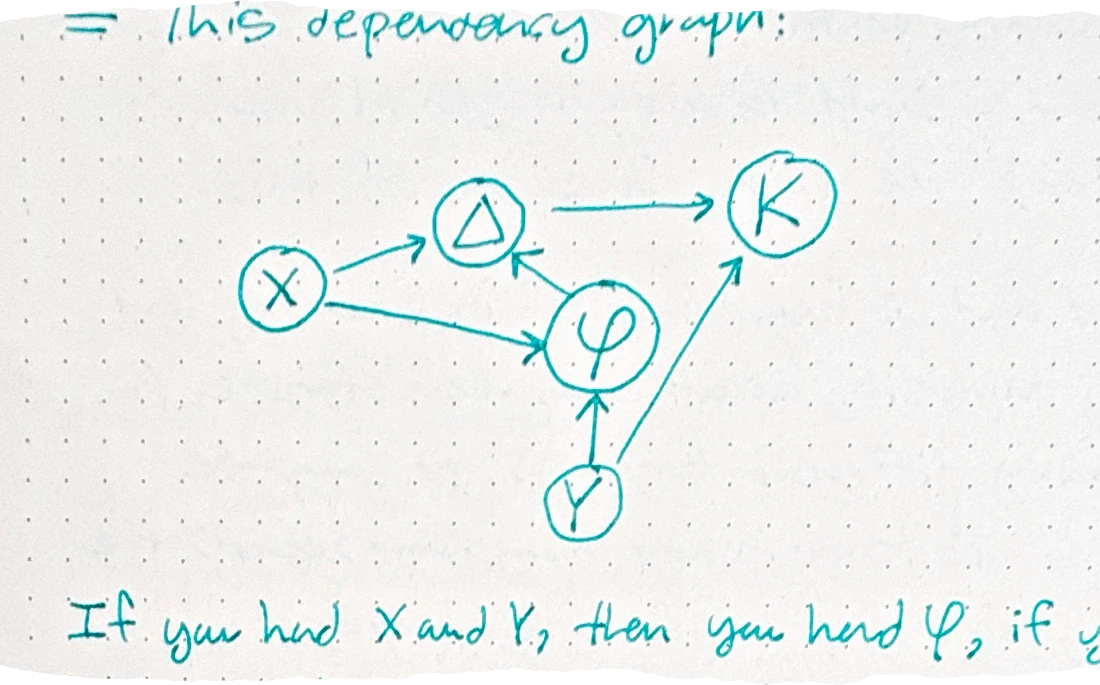

There I noticed that the questions, the ones I could not solve, were nothing but dependency graphs. They would say: you have X, Y, and Z, figure out K. All of these required you to know that, for example, K = Y * Δ. So what is Δ? It is Δ = sin(X/2 * π * φ). What is φ? It comes from Y = φ / tan(X/8); you had X and Y, so you could compute φ, and voilà! In other words:

| Symbol | Formula | Dependencies |
|---|---|---|
| K | K = Y * Δ | Y, Δ |
| Δ | Δ = sin(X/2 * π * φ) | X, φ |
| φ | φ = Y * tan(X/8) | Y, X |

= This dependency graph:

If you had X and Y, then you had φ; if you had X, Y, and φ, you now had Δ; and once you had X, Y, φ, and Δ, you had K, and then your graph was saturated.

Alternatively, K could also rely on Σ, and so until you knew Σ there was no way to compute K.

I took this idea and made a simple language and environment that would take a series of formulas and constants, compute the dependency graph, and then iterate on it until there was nothing more it could compute. I gave it the equations of the course, and then for each question I would give it the knowns as constants and have it compute all the derivable answers, without my knowing anything about that course. I aced the exam with a 100% score. The system wasn’t as sophisticated as Stephen Wolfram’s rewriting system solving differential equations, but it had proved my point. And it gave me a new vision, something that I had been eager to make for a long time and saw my “@KaryLanguage” as the way to make: what if you could scale this, have all the problems of the world defined in it, and find the terminal nodes? By fixing those nodes the rest would be fixed automatically.
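A minimal sketch of that saturation loop, using the illustrative formulas from the table (this is not the original language or environment; the `rules` layout and the name `saturate` are assumptions):

```python
import math

# symbol -> (dependencies, formula); the formulas are the example ones from the table.
rules = {
    "K": (("Y", "Δ"), lambda v: v["Y"] * v["Δ"]),
    "Δ": (("X", "φ"), lambda v: math.sin(v["X"] / 2 * math.pi * v["φ"])),
    "φ": (("Y", "X"), lambda v: v["Y"] * math.tan(v["X"] / 8)),
}

def saturate(rules, knowns):
    """Keep applying every rule whose dependencies are known until nothing new appears."""
    values = dict(knowns)
    progress = True
    while progress:
        progress = False
        for symbol, (deps, formula) in rules.items():
            if symbol not in values and all(d in values for d in deps):
                values[symbol] = formula(values)
                progress = True
    return values

solved = saturate(rules, {"X": 1.0, "Y": 2.0})
print(sorted(solved))  # ['K', 'X', 'Y', 'Δ', 'φ']

# If K also needed an unknown Σ, the loop would stop without ever computing K.
blocked = saturate({"K": (("Y", "Σ"), lambda v: v["Y"] * v["Σ"])}, {"Y": 2.0})
print("K" in blocked)  # False
```

The loop ends when a full pass adds nothing, which is the “saturated” state described above.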

This made me go and study Mathematica and SMP, and the rest of Stephen Wolfram’s universe. I had to understand: how does the language solve equations? How does it handle symbolic manipulation? How does it compute without evaluating? And so I went into the symbolic world and rewriting systems, and got lost in programming language theory until I found Chris Granger, Eve Language, and then the new rabbit hole I am inside today. Yet still, I always believed in these graph dependencies and the saturation of their combinations.

The next chapter was when I tried to think about education in 1285. I had to figure out the “atoms” of the system so that I could redesign the whole of education on top of them. And so I was contemplating how I learned things. Nothing much had happened. But then one day, as is our ritual with Zea, we woke up and went into the kitchen; we sit at a table that houses our coffee machines, we start our days with cups of caramello nespresso and strawberry-flavored chocolates, and talk till we load. (That is broken because of my ████████ service, but we had it for years.) And so, Zea was looking at her drawings inside the Procreate app and I was looking with her. She knows how to paint, but her drawings there were all basic at first; she was just exploring the “Tools” of the app: different brushes, colors, settings. But then the situation changed once she had seen enough tools; her work began to be paintings, and so I had this realization that the world is made of tools and play.

Here the Toolbox Theory was born, and it suddenly unified many things together; I had speculated that we are made of a mental “toolkit” that consists of all our mental faculties and the external, literal tools we know how to operate. Play was the essential mechanism to explore the possibilities of a tool. Essentially, Play was a sandbox where the mind could explore tools without them having any effect on the outside world.

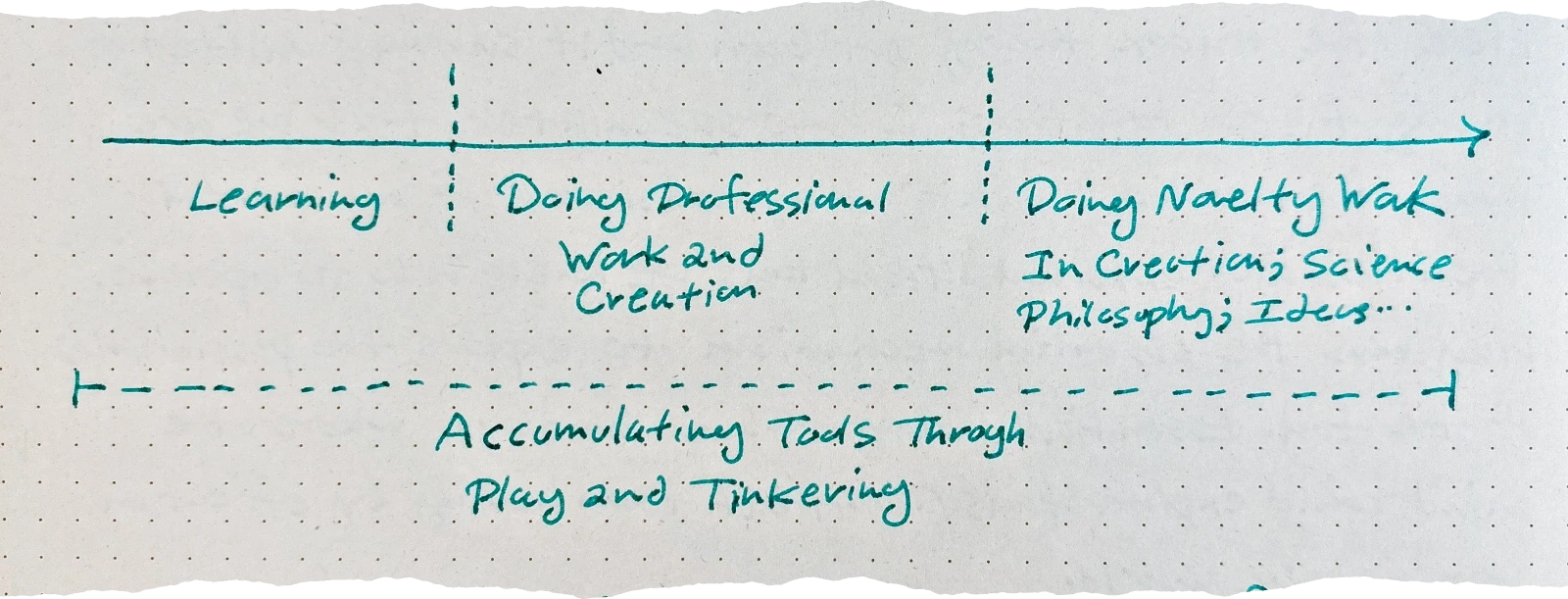

As one had enough tools—the way Zea did in her later drawings—one would have new possibilities of connecting them. And also enough refinements over the details of the drawing or whatever creation they were making that would have rendered them as legitimate “work”, things that others would have loved to see; buy; use… and if we had enough of these tools gathered from enough disparate walks of life; then the combinations would have become really novel; perhaps lab-level work. And so here we go: Unification of “Play, Education, Work, and Lab / Research / Creation of New Tools Back To Society”

And I guess through this lens Lev Vygotsky’s “Zone of Proximal Development” can be seen in a new light. If we assume that the Toolbox Theory is correct; and then integrate my idea of “New Ideas As Combination Of Previous Tools”, we see how creativity itself works: By continually playing with our tools and tinkering with them we are on a never ending loop of combining different tools and sometimes their results are brilliant and amazing; other times random nonsense.

There is also a very interesting factor at play here. Our toolboxes are made of whatever we found in life; each one of us has a nearly infinite set of these tools in our mental toolbox. And this is the amazing brute force of nature: because each of us has a different toolbox, our creations are also different. I have written about this at length previously in the Duck Protocol: Hidden Mind Kernel of The Human Mind.

In my Archive (Waihona Ike), I have tried my best to gather the context of my day: Quotes I had read; videos I had seen; Books that were open. And the timeline of my work; just so that it could be seen, how each new entry was the combination of all the past in a new form. Archive is basically my attempt to capture a toolbox process. The Minddropss in real life are many many times more. Only I have a hard time recording them. When I am inside of a car, walking, showering, in all of them I find myself constantly “realizing” things. I’m sure others are the same; maybe only I am more aware of them or have cultivated a richer environment for them to appear, as maximizing creativity was the bigger aim of my childhood.

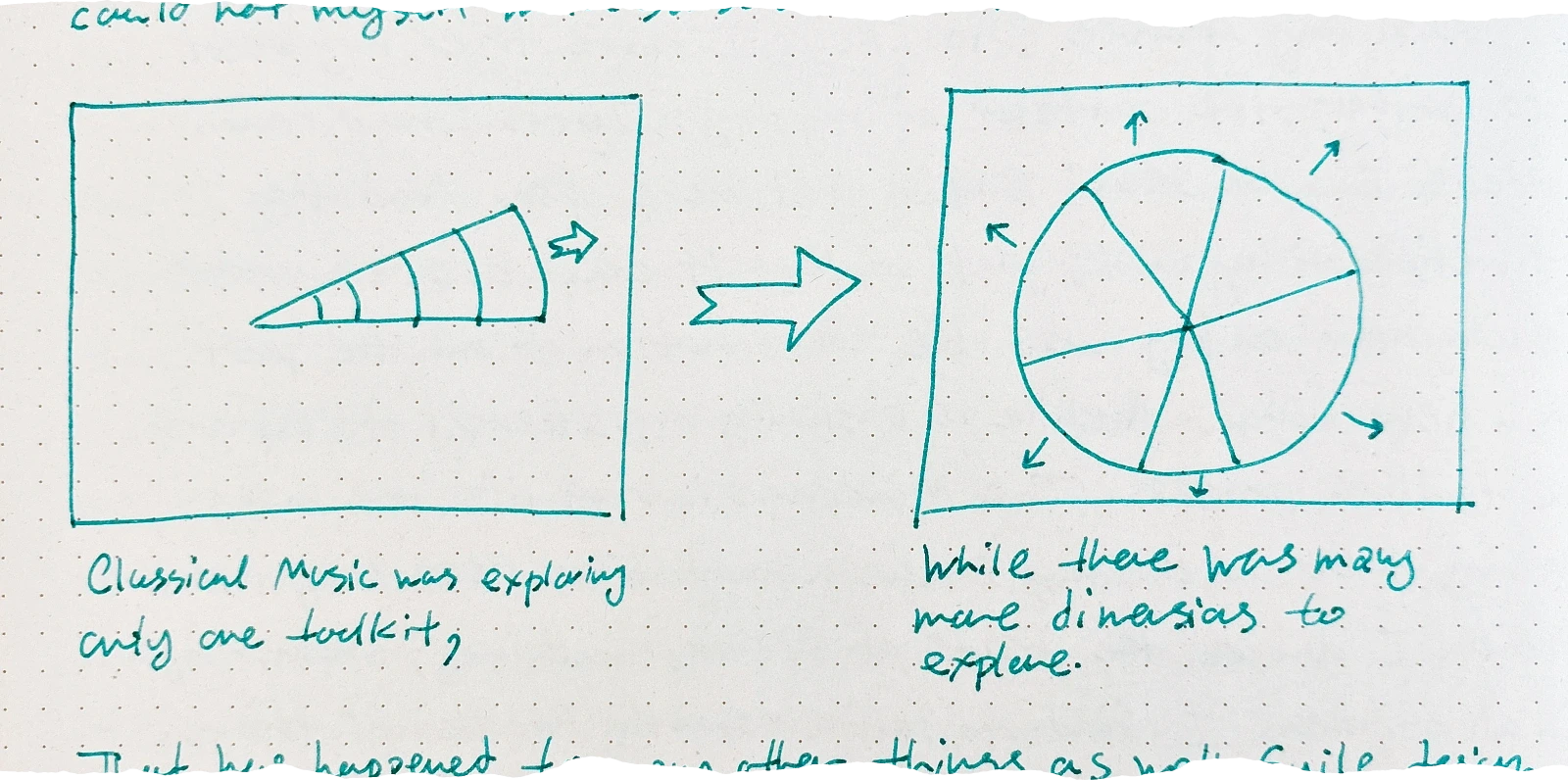

One last point is to recognize the historical saturations of Cultural Toolboxes. Maybe not actual saturation, but a form of getting tired of categorically the same outputs, which in the bigger picture might count as the saturation case. It was evident in my “Classical Diagram”, which I could not myself make sense of back then:

That has happened to many other things as well. Guild design to Art Nouveau, to Art Deco, to Bauhaus. We saturate and move on.

And so, here it was a completely different viewpoint.

Perhaps the first faint glimmering of an orientation toward something like Mathematica came when I was about 6 years old—and realized that I could “automate” those tedious addition sums I was being given, by creating an “addition slide rule” out of two rulers. I never liked calculational math, and was never good at it. But starting around the age of 10, I became increasingly interested in physics—and doing physics required doing math.

There were three programs that I found out about—all as it turned out started some 14 years earlier from a single conversation at CERN in 1962: Reduce (written in LISP), Ashmedai (written in Fortran) and Schoonschip (written in CDC 6000 assembler).

Then in the summer of 1977 I discovered the ARPANET, or what’s now the internet. There were only 256 hosts on it back then. And @O 236 went to an open computer at MIT that ran a program called Macsyma—that did algebra, and could be used interactively. I was amazed so few people used it. But it wasn’t long before I was spending most of my days on it. I developed a certain way of working—going back and forth with the machine, trying things out and seeing what happened. And routinely doing weird things like enumerating different algebraic forms for an integral—then just “experimentally” seeing which differentiated correctly.

And so it was that I embarked on what would become SMP (the “Symbolic Manipulation Program”). I had a pretty broad knowledge of other computer languages of the time, both the “ordinary” ALGOL-like procedural ones, and ones like LISP and APL. At first as I sketched out SMP, my designs looked a lot like what I’d seen in those languages. But gradually, as I understood more about how different SMP had to be, I started just trying to invent everything myself.

I remember thinking: “I don’t officially know computer science; I’d better learn it”. So I went to the bookstore, and bought every book I could find on computer science—the whole half shelf of them. And proceeded to read them all.

I put together a little “working group” at Caltech—which for a while included Richard Feynman.

A big early decision was what language SMP should be written in. Macsyma was written in LISP, and lots of people said LISP was the only possibility. But a young physics graduate student named Rob Pike convinced me that C was the “language of the future”

Tini Veltman, author of Schoonschip, and later winner of a physics Nobel Prize, had told me that storing numbers in floating point was one of the best decisions he ever made, because FPUs were so much faster at arithmetic than ALUs.

Before SMP, I’d written lots of code for systems like Macsyma, and I’d realized that something I was always trying to do was to say “if I have an expression that looks like this, I want to transform it into one that looks like this”. So in designing SMP, transformation rules for families of symbolic expressions represented by patterns became one of the central ideas.

My first idea was to go to what would now be called the “technology transfer office” at Caltech, and see if they could help. At the time, the office essentially consisted of one pleasant old chap. But after a few attempts, it became clear he really didn’t know what to do. I asked him how this could be, given that I assumed similar things must come up all the time at Caltech. “Well”, he said, “the thing is that faculty members mostly just go off and start companies themselves, so we never get involved”. “Oh”, I said, “can I do that?”. And he leafed through the bylaws of the university and said: “Software is copyrightable, the university doesn’t claim ownership of copyrights—so, yes, you can”.

And following a practice I’d started with SMP, I wrote documentation as I developed the design. I figured if I couldn’t explain something clearly in documentation, nobody was ever going to understand it, and it probably wasn’t designed right. And once something was in the documentation, we knew both what to implement, and why we were doing it.